The Question

When an executive talks about a topic on an earnings call, does their voice carry information that the transcript alone misses? Pace, pauses, pitch, hesitation: researchers know these signals are there but have struggled to extract them at scale. Dr. Dre has terabytes of corporate audio recordings going back to 2005 and a research question that needs a working pipeline to answer. The pipeline does not exist yet. That is the project.

What Students Build

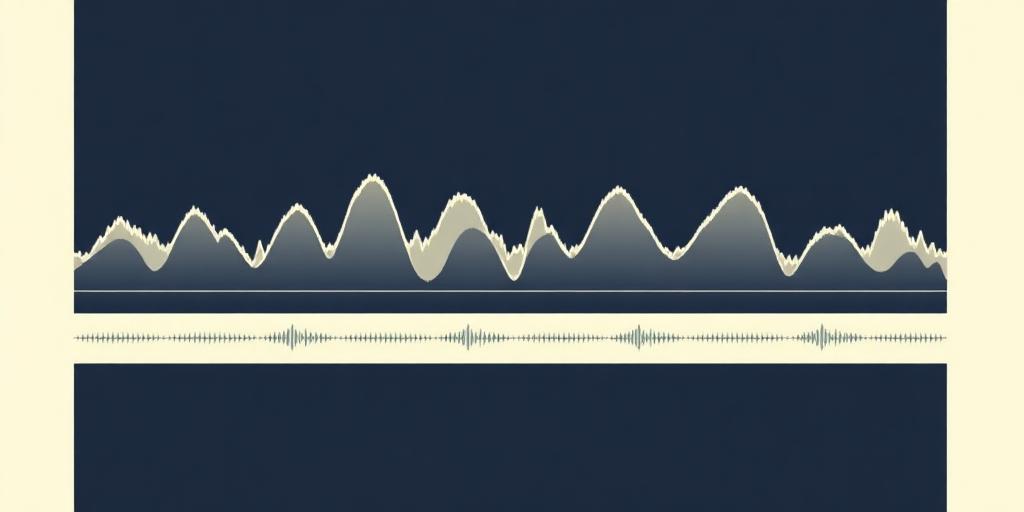

Over five weeks, students build a scoped version of the same pipeline Dr. Dre's research interns are building over four months. They take a real audio clip, run it through speech-to-text, build a tool that finds moments in the transcript where a target topic appears, map those moments back to specific timestamps in the audio, extract a short window around each one, and analyze the speaker's voice in those windows: pitch, pace, pause frequency. They finish with a visualization showing where the topic comes up across the call, mapped against what the speaker's voice was doing at those moments, and a presentation hypothesizing what's interesting in the data.
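To make the middle of that pipeline concrete, here is a minimal sketch of the step from transcript to voice features. It assumes the speech-to-text stage returns word-level start and end times in seconds. It uses plain keyword matching as the simplest possible topic finder. All names (`Word`, `find_topic_hits`, `topic_windows`, `window_stats`) are hypothetical, not part of any existing codebase. Pitch is not shown because it needs the raw audio samples and a pitch tracker; pace and pause frequency can be computed from the timestamps alone.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the recording (assumed ASR output)
    end: float


def find_topic_hits(words, keywords):
    """Return indices of words matching any target keyword (case-insensitive)."""
    targets = {k.lower() for k in keywords}
    return [i for i, w in enumerate(words) if w.text.lower().strip(".,?!") in targets]


def topic_windows(words, hit_indices, pad=5.0, duration=None):
    """Map each hit to a (start, end) window of +/- pad seconds, merging overlaps.

    Assumes hit_indices are ascending and word timestamps are monotonic,
    so windows arrive sorted by start time.
    """
    windows = []
    for i in hit_indices:
        start = max(0.0, words[i].start - pad)
        end = words[i].end + pad
        if duration is not None:
            end = min(end, duration)  # don't run past the end of the audio
        if windows and start <= windows[-1][1]:
            # Overlaps the previous window: extend it instead of adding a new one.
            windows[-1] = (windows[-1][0], max(windows[-1][1], end))
        else:
            windows.append((start, end))
    return windows


def window_stats(words, window, min_pause=0.3):
    """Speaking pace (words/min) and pause count for words fully inside the window."""
    start, end = window
    inside = [w for w in words if w.start >= start and w.end <= end]
    minutes = (end - start) / 60.0
    pace = len(inside) / minutes if minutes > 0 else 0.0
    # A pause is any gap between consecutive words of at least min_pause seconds.
    pauses = sum(1 for a, b in zip(inside, inside[1:]) if b.start - a.end >= min_pause)
    return {"words_per_min": pace, "pauses": pauses}
```

For example, with a transcript where "margin" is the target topic, `topic_windows(words, find_topic_hits(words, ["margin"]), pad=1.0)` gives the time ranges to clip from the audio, and `window_stats` summarizes how the speaker was talking inside each one. Merging overlapping windows keeps a topic mentioned several times in quick succession from being counted as separate moments.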

The pipeline is modular by design. Students build it in pieces, swap components in and out, and compare different approaches. The work mirrors what Dr. Dre's research lab actually does, scoped to what a five-week cohort can ship.
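One way to get that swap-and-compare modularity is to give each stage a shared call signature, so any implementation can be dropped in. The sketch below does this for the topic-finding stage only; `TopicFinder`, `keyword_finder`, `make_synonym_finder`, and `compare` are hypothetical names for illustration, and the synonym list is an assumption, not a method taken from the lab's pipeline.

```python
from typing import Protocol, Sequence


class TopicFinder(Protocol):
    """Any callable taking transcript sentences and a topic, returning matching indices."""

    def __call__(self, sentences: Sequence[str], topic: str) -> list[int]: ...


def keyword_finder(sentences: Sequence[str], topic: str) -> list[int]:
    """Baseline: a sentence matches if it contains the topic string."""
    return [i for i, s in enumerate(sentences) if topic.lower() in s.lower()]


def make_synonym_finder(synonyms: dict[str, list[str]]) -> TopicFinder:
    """Alternative: also match a hand-written list of related terms."""

    def finder(sentences: Sequence[str], topic: str) -> list[int]:
        terms = [topic.lower()] + [t.lower() for t in synonyms.get(topic, [])]
        return [i for i, s in enumerate(sentences) if any(t in s.lower() for t in terms)]

    return finder


def compare(finders: dict[str, TopicFinder], sentences: Sequence[str], topic: str) -> dict[str, list[int]]:
    """Run every interchangeable finder on the same input for side-by-side comparison."""
    return {name: f(sentences, topic) for name, f in finders.items()}
```

Because both finders satisfy the same interface, the downstream timestamp and voice-analysis steps do not need to change when one is replaced by another (for instance, by an embedding-based or LLM-based finder).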

The Mentors

Dr. Andre Martin (Dr. Dre to his students) is an assistant professor at Notre Dame's Mendoza College of Business. He spent fifteen years at Xerox and in defense contracting before a PhD in marketing opened a different question: what can AI reveal about human behavior, language, and persuasion in real business contexts? This summer his students will build the pipeline that powers his ongoing research, processing earnings call audio at scale to surface signals that human listeners would miss. The work is real. The data is real. The pipeline they build will be used.

Dr. Dre is the research sponsor for this lab. The project, the data, and the research direction come from him. An Academy mentor runs day-to-day sessions and works closely with him on the project. Dr. Dre joins key milestones and Demo Day, and stays available to students throughout.

Who This Is For

Python proficiency is required: comfort with functions, libraries, and reading documentation. Familiarity with audio data, speech processing, or basic machine learning is a plus but not required; the Academy mentor will teach what students need. The right student here likes building things that work end to end, is comfortable with messy real-world data, and finds it interesting that human voices carry information that words alone don't. Students who only want to work in clean notebooks with toy datasets will struggle.

Logistics

Five weeks. Mondays, Wednesdays, Fridays, 11:00 AM to 12:15 PM ET. Friday sessions extend to 1:00 PM for Demo Day. Cohorts of 3 to 4 students per mentor. $4,500. Apply by May 11, 2026.

Beyond the live sessions, students work on their own, and they are not alone when they do. The lab is supported by a 24/7 Slack channel and a team of scholars and practitioners at the Academy. Students also work alongside SeqHub's AI co-teacher, which helps them think through problems on off days without doing the work for them. Plan for 10 to 12 hours per week, with 4.5 hours in live sessions and the rest on independent work.